Reports

2024 Gartner® Emerging Tech: The Impact of AI and Deepfakes on Identity Verification

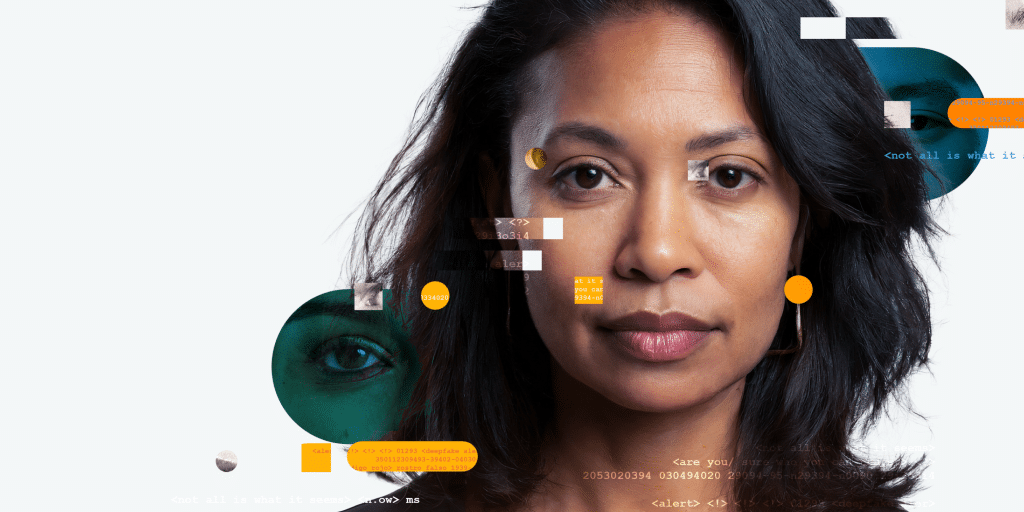

iProov Threat Intelligence Report 2024: The Impact of Generative AI on Remote Identity Verification

Identity Crisis in the Digital Age: Using Science-Based Biometrics to Combat Malicious Generative AI

Gartner Market Guide for User Authentication

Vox Pop: Getting Identity Right From the Start

Unlocking Financial Inclusion With Digital Identity and Biometric Verification

Stolen to Synthetic: The Evolution of Identity Fraud and the Need for Resilient Identity Verification

Demystifying Biometric Face Verification

Using Biometric Technology To Fight Public Sector Benefit Fraud

iProov Biometric Threat Intelligence eBook

Gartner® Buyer’s Guide for Identity Proofing

How Can Financial Institutions Safeguard Against Deepfakes: The New Frontier of Online Crime?

iProov Biometric Threat Intelligence Report

How Latin American Banks Can Safeguard Against Deepfakes: The New Frontier of Financial Crime

KuppingerCole Names iProov an Innovation Leader in 2022 Market Compass

Digital Identity Report: What Consumers Want and How Governments, Banks and Other Enterprises Can Deliver

The New EU Digital Identity Wallet: Four Key Challenges and the Route to Success

Work From Clone: How Criminals Are Using Deepfakes for Job Applications

Gartner Innovation Insight for Biometric Authentication

Couch to Gate: How Enabling Document Check-From-Home Can Improve the Travel Experience and Deliver More Revenue

Couch to Gate: How Enabling Document Check-From-Home Can Improve the Travel Experience and Deliver More Revenue (Spanish edition)

Governments Scaling Gains From Disruption: 2022 Insights from Gartner

Biometric Authentication for Identity Service Providers

Biometric Authentication for Government

Biometric Authentication for Financial Services

Industry Insight: Biometrics for Business

iProov Named a Gartner Cool Vendor: Get Your Copy

Online Face Verification in Insurance: 5 Ways To Enable Digital Transformation

Online Banking in Canada Report: Balancing Security with User Experience?

Digital Identity in the USA: What Do Americans Want From the DMV?

Case Studies in Government Digital Identity

Online Banking in the U.S. Part 2: Digital Account Access

Online Banking in the U.S. Part 1: Onboarding

The Threat of Deepfakes Report

Top Considerations for Online Onboarding in Financial Services

The End of the Password Report

Deepfakes: The Threat to Financial Services Report