November 21, 2019

Written by Andrew Bud, CEO & Founder

When we launched iProov in 2013, it seemed obvious to us that “replay attacks” would be amongst the most dangerous threats to face verification. These occur when an app, device, communications link or store is compromised and video imagery of a victim is stolen; the stolen imagery is subsequently used to impersonate that victim. Right from the start, we designed our system to be strongly resilient to this hazard. However, only now is the market beginning to understand the danger of replay attacks.

What is a replay attack and how can it be resisted?

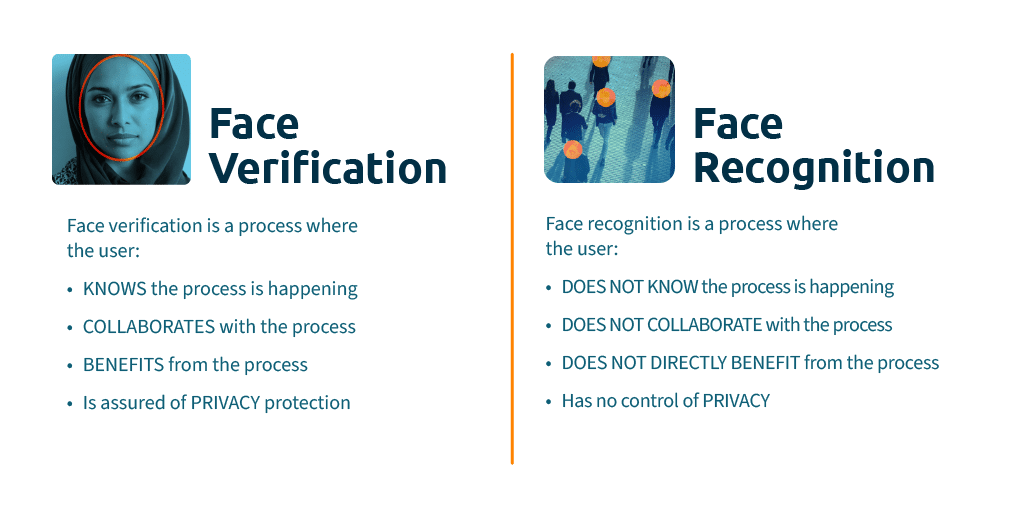

A dwindling number of people still believe that face recognition is the key to the security of face verification. It isn’t. In practical terms, it would be foolish of a criminal to try to impersonate a victim by trying to look like them – it is incredibly difficult to do and so unlikely to succeed that it is almost pointless. Since our faces are all public and easy to copy, it is far more effective to present imagery of the victim. Most industry protagonists still focus on artefact copies – photos, screen imagery (stills or videos) or masks. Lots of energy is spent on masks. Real-f Co., based in Japan, creates some of the most realistic masks available – the skin pore texture is perfect and even the tear-ducts glisten. Although they are visually compelling, such artworks can cost $10,000. Masks are not a scalable way to economically attack large numbers of victims. Of course, robust detection of masks is essential, but there are bigger dangers.

If an attacker can implant malware on a user device, for example by getting users to click on a rogue link, such malware can potentially gain access to the imagery captured by apps on the device. This is true of all apps, no matter how strongly they have been armoured. App hardening measures don’t block attacks; they simply increase the effort the attacker must invest to succeed. And if the prize is access to millions of devices, the business drivers to make that investment are compelling. This is why, at iProov, we never rely on the integrity of the device. Once stolen, the video will be replayed digitally into a malicious device, bypassing the camera and never appearing on a screen at all.

That’s why our core Flashmark technology makes every verification video unique. Flashmark illuminates the user’s face with a one-time sequence of colours from the device screen. The illuminated face is what we call a “one-time biometric”. Like a one-time passcode (the number sent by text message to authenticate to many secure services), it is obsolete as soon as it is used and worthless if stolen.

Any malware or attack that attempts to steal a Flashmarked face video finds that it is totally useless – with the wrong colour sequence on the face, it is immediately detected and rejected. This same technology also provides the industry’s only strong defence against animated stills, synthetic videos and Deepfakes, a threat iProov has highlighted for several years.
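To make the one-time principle concrete, here is a minimal sketch of a challenge that is issued once and accepted at most once. It is an illustration only, not iProov's implementation: the `ChallengeStore` class, the palette, the sequence length and the idea of passing in an already-extracted `observed` colour sequence are all assumptions made for the example. How the colours are actually recovered from the illuminated face is not described here and is left out.

```python
import secrets

# Hypothetical palette and length; the article does not specify either.
PALETTE = ("red", "green", "blue", "yellow", "cyan", "magenta")
SEQUENCE_LENGTH = 8


class ChallengeStore:
    """Issues one-time colour sequences and accepts each at most once."""

    def __init__(self):
        self._pending = {}  # session_id -> expected colour sequence

    def issue(self):
        """Create a fresh, unpredictable colour sequence for a new session."""
        session_id = secrets.token_hex(16)
        sequence = tuple(secrets.choice(PALETTE) for _ in range(SEQUENCE_LENGTH))
        self._pending[session_id] = sequence
        return session_id, sequence

    def verify(self, session_id, observed):
        """Compare the colours observed on the face with the issued sequence.

        The challenge is removed whether or not it matches, so the same
        session can never be verified twice.
        """
        expected = self._pending.pop(session_id, None)
        if expected is None:
            return False  # unknown or already-used session
        return tuple(observed) == expected


store = ChallengeStore()
session_id, sequence = store.issue()
print(store.verify(session_id, sequence))  # True: genuine first use
print(store.verify(session_id, sequence))  # False: replay of a used session
```

A stolen recording carries the colour sequence of the session in which it was captured. Replayed into a new session, it is compared against a freshly generated sequence and is rejected unless the two happen to coincide, which with this toy palette and length has a probability of 1 in 6^8.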

The great advantage of this technology is that it is extremely usable. Other methods of replay defence destroy usability by bombarding the user with increasingly baffling instructions to move their head one way, then their phone another way, then recite numbers, and so on. Very often, they fail because users don’t do as they are told or, quite simply, because the instructions are impossible to understand. iProov Flashmark is entirely passive – no action is required from the user, so transaction success rates are uniquely high.

The suggestion that user devices are impervious, or that mobile apps can be made incorruptible, is misleading and dangerous. We believe that at the heart of good biometric security lies the ability to detect and deflect attacks based on replayed stolen recordings and other digital imagery, directly injected into the dataflow. Anything less lets down enterprises and their users.